Warriors, Cavaliers owners buy into ‘League of Legends’ series

As eSports has grown into the arena-filling behemoth it is today, traditional sports has been clamouring for a stake. Talent has been snapped up, tournaments established, and multi-million dollar investments made. The trend looks set to continue with news that two of the NBA’s biggest rivals are jumping on the competitive gaming bandwagon. ESPN is reporting that the Cleveland Cavaliers have nabbed a spot in the North American League of Legends Championship Series (LCS).

They join the Golden State Warriors, whose owner Joe Lacob (along with son Kirk) splurged $13 million on an LCS franchise last week. That’s the asking price for a new spot in the championship, whereas existing teams must fork out $10 million. And, if the latest reports are to be believed, FlyQuest (the team backed by Milwaukee Bucks co-owner Wes Edens) will be shelling out that sum for a permanent place in the LCS next year.

It may sound like the NBA is taking over, but it’s left room for others to squeeze in. On Thursday, the New York Yankees acquired a stake in Echo Fox, the eSports franchise owned by NBA alum Rick Fox. The deal forms part of a bigger partnership with Vision eSports, giving the Yankees a stake in an “ecosystem” of eSports properties, including a stats company and a content production outfit. On the flip side, the investment should help toward Echo Fox’s franchise fee too.

The Yankees have announced a new partnership with Vision Esports to help create and manage an “ecosystem” of esports properties. pic.twitter.com/gARezbFVtL

— New York Yankees (@Yankees) October 19, 2017

Source: ESPN (1), (2), New York Yankees (Twitter)

Designing the technology of ‘Blade Runner 2049’

This article contains spoilers for ‘Blade Runner 2049’

There’s a scene in Blade Runner 2049 that takes place in a morgue. K, an android “replicant” played by Ryan Gosling, waits patiently while a member of the Los Angeles Police Department inspects a skeleton. The technician sits at a machine with a dial, twisting it back and forth to move an overhead camera. There are two screens, positioned vertically, that show the bony remains with a light turquoise tinge. Only parts of the image are in focus, however. The rest is fuzzy and indistinct, as if someone smudged the lens and never bothered to wipe it clean.

Before leaving the room, K asks if he can take a closer look. The blade runner — someone whose task it is to hunt older replicants — dances over the controls, hunting for a clue. As he zooms in, the screen changes in a circular motion, as if a series of lenses or projector slides are falling into place. Before long, K finds what he’s looking for: A serial code, suggesting the skeleton was a replicant built by the now defunct Tyrell Corporation.

Throughout the movie, K visits a laboratory where artificial memories are made; an LAPD facility where replicant code, or DNA, is stored on vast pieces of ticker tape; and a vault, deep inside the headquarters of a private company, that stores the results of replicant detection ‘Voight-Kampff’ tests. In each scene, technology or machinery is used as a plot device to push the larger narrative forward. Almost all of these screens were crafted, at least in part, by a company called Territory Studios.

The London-based outfit is known for developing on-set graphics. These are screens, or visuals, that the actor can see and, depending on the scene, physically interact with during a shoot. They have the potential to raise an actor’s performance while creating interesting shadows and reflections on camera. Each one also gives the director more freedom in the editing room. If you have a screen on set, you can shoot a scene from multiple angles and freely compare them during the edit. The alternative — tailoring bespoke graphics for specific shots — is a time-consuming process if the director suddenly decides to change perspective in a scene.

Territory has worked on a bevy of science-fiction films including Ex Machina, The Martian and Guardians of the Galaxy. One of its earliest and most prolific projects was Prometheus, the divisive Alien prequel directed by Ridley Scott in 2012. The team was hired to design the computers and screens inside the titular spaceship, which is ultimately overrun by an alien virus. The bridge, the medical area, the ship’s escape pods — Territory designed them all. In post, the company also handled the crew’s hypersleep chambers, medical tablets and the HUD system that wraps around their POV helmet-cam feeds.

During the project, Territory worked with Paul Inglis, the film’s senior art director, and Arthur Max, the production designer. Years later, David Sheldon-Hicks, co-founder and creative director at Territory, was talking on the phone with Max about Alien: Covenant. Instead, Max suggested that he reach out to Inglis about Blade Runner 2049. “So I dropped him an email,” Sheldon-Hicks recalled, “and said, ‘If you’re on the project I think you’re on, I will give you my right arm to put us on there.’” Inglis laughed and told him that unfortunately, Territory would have to go through a three-way bid for the contract.

It was a big moment. The original Blade Runner is considered by many to be the greatest sci-fi film ever released. Directed by Scott in 1982, it stars Harrison Ford, fresh off The Empire Strikes Back, as retired police officer Rick Deckard. He’s forced to resume his role as a blade runner, tracking down a group of replicants who have fled to Earth from their lives off-world.

Blade Runner is a beautiful noir film filled with rain and neon lights. Based on the Philip K. Dick novel Do Androids Dream of Electric Sheep?, it explores some heavy themes, such as what it means to be human, the importance of memories and how our obsession with technology could lead to societal and environmental decay. Critics had mixed reactions upon its release, but over time, the film’s reputation has grown to the point where it’s now considered a classic.

Blade Runner 2049 was, therefore, a huge creative gamble. Territory was awarded the contract in March 2016, before director Denis Villeneuve had released his award-winning sci-fi movie Arrival. The French Canadian was highly regarded, however, for his work on Prisoners, Enemy and Sicario. He had proven his ability to make powerful, thoughtful and visually stunning movies. Still, the stakes were enormous. So much time had passed since the original Blade Runner, and so many movies had riffed or expanded upon its ideas. To succeed, Blade Runner 2049 would need to be something special.

Peter Eszenyi was Territory’s creative lead on Blade Runner 2049. He joined the company in 2011 to help Sheldon-Hicks with some idents for Virgin Atlantic’s in-flight entertainment system. Eszenyi quickly moved on to movies, however, helping the team create computer screens, drone footage and satellite imagery for the 2012 political thriller Zero Dark Thirty. He’s since worked on Guardians of the Galaxy, Marvel’s Avengers: Age of Ultron and the live-action adaptation of Ghost in the Shell, to name just a few.

Peter Eszenyi, Territory Studios’ creative lead on Blade Runner 2049.

The company’s work on Blade Runner 2049 started with a few cryptic calls. They were “terribly hard,” Eszenyi recalled, because the film’s producers were so secretive about the project. Territory was given a vague list of screens, or sets, that the studio thought they could help with. One line just read “K Spinner,” for instance. But when Eszenyi asked for more information, the answer would always be the same: “No” or “We can’t tell you.” Despite the lack of information, Territory started working on mood boards, trusting that some eventual feedback would steer them in the right direction.

Inside the company, Eszenyi and Sheldon-Hicks were joined by creative director Andrew Popplestone, producer Genevieve McMahon and motion designer Ryan Rafferty-Phelan. (The team would scale up to 10 during the project, but these five were the core.) Together, they started looking for inspiration. The film’s producers had given them one critical detail about the world: a massive, cataclysmic event had occurred since the previous film, wiping out most forms of modern technology. Blade Runner 2049 would still feature computers and screens, however. It was, therefore, Territory’s job to help figure out what that meant and what everything would look like.

Inspiration came from all sorts of places. “It might be something you see in a shop window,” Popplestone said. “You might be walking around here and see a piece of furniture that’s made out of glass, or a sculpture, something like that.” The team found a lot, unsurprisingly, online. They scoured Pinterest and other sites for interesting sculptures and photography. Slowly, they curated their images into themes, or ideas, that could be organized as Pinterest boards. The team would then get together and chat face-to-face, discussing their ideas before breaking off and pulling together more reference points.

“I vividly remember debating bacteria,” Eszenyi said. “Can they use certain types of bacteria to create green colors? Or blue ones?” They thought about jellyfish that often wash ashore and turn everything a startling shade of blue. Could they be harnessed somehow to create a primitive color display? How would that work? At one point they were imagining bacteria that could be genetically engineered to change color. They thought about computers that could excite them to trigger a color-switch, thereby altering the image. But then there was the screen. “Would this display be fast enough to be usable?” Eszenyi asked. “Or would it be a slow-changing kind of thing?”

A month later, four of the Territory team visited Budapest, Hungary, where most of Blade Runner 2049 was being shot. For Eszenyi, it was a surreal experience. He grew up in Hungary and remembers watching Blade Runner in secondary school. In particular, he recalled the sweeping, electronic score by Vangelis and his literature teacher gushing over the ending with replicant Roy Batty, played by Rutger Hauer.

David Sheldon-Hicks, co-founder and creative director at Territory Studios.

With mood boards in hand, the Territory team were guided through studio security and into a meeting room with a table and a TV at the far end. It was completely empty, so the group started chatting amongst themselves. Then, suddenly, people started shuffling in. “We didn’t realise that, one, we’d meet Denis, or that he’d be there,” Popplestone recalled, “and, two, that it was going to be the entire visual effects team, and the producers as well.” Ten or 12 people in total took a seat. Then they all turned and looked at Popplestone. “So I was like, ‘Okay then!’” he recalled. “Here’s what we’ve got…”

But the team needn’t have worried. Denis was warm but direct with his feedback. If something caught his eye, he would probe Territory about its meaning and how the group might develop the idea further. “It was always, ‘I like *this* because of *this*,’” Eszenyi said. “What would you want to do with this? Where do you want to take it from here?” Some concepts he dismissed immediately, however. Eszenyi, for instance, liked an artist who had drawn illustrations for the Soviet-era space program. Beautiful illustrations of quiet, analog vessels from the 1970s and ’80s. But they didn’t match up with Villeneuve’s vision.

The director disliked anything that felt too modern or sophisticated. If you could imagine it in a Marvel movie, for instance, he wasn’t interested. But if it looked optical, like a microscope or a projector, he took notice. Glass, lenses and harsh lighting. Villeneuve also leaned toward nature; images that felt organic and abstract. “The whole point of the story is that we don’t have digital-based technology,” Popplestone said. “So he wanted something that was completely removed from that.”

Before heading home, Territory visited the art department on set. The team was also given permission to step inside production designer Dennis Gassner’s room, which was filled with concept art and storyboards. At last, the group felt like they had a good grasp of the movie and the world Villeneuve was trying to build.

Back in England, Territory refined its ideas. At its Farringdon office, the team experimented with physical props and filming techniques. They tried shooting through a projector to see how different lenses would warp the final image. The group took macro photographs of fruit, including a half-eaten grape that someone had left in the office. Eszenyi even looked at photogrammetry, a technique that uses multiple photographs and specialized algorithms to build 3D models. It’s been used before to recreate real-life locations, such as Mount Everest, in VR and video games.

Territory Studios’ creative director Andrew Popplestone.

“It was almost like being back at university again,” Popplestone said. The group operated like art students, experimenting with techniques that might produce abstract images or textures. A meeting room was eventually dedicated to the project, which the film’s producers had code-named Triboro. “We just gave up on meetings,” Sheldon-Hicks said. “The project took priority.”

Eszenyi also became quite friendly with his local butcher. An assortment of “meat-based stuff,” including pig’s eyes, started to gather in the office fridge, much to Sheldon-Hicks’ displeasure. “I was like, ‘Seriously, I’m getting takeaway for the next few weeks. I’m not going in there. It’s horrible,’” he recalled with a chuckle.

Blade Runner 2049 was challenging because it required Territory to think about complete systems. They were envisioning not only screens, but the machines and parts that would make them work.

With this in mind, the team considered a range of alternate display technologies. They included e-ink screens, which use tiny microcapsules filled with positively and negatively charged particles, and microfiche sheets, an old analog format used by libraries and other archival institutions to preserve old paper documents. When the group was ready to present its new ideas, it was Inglis, rather than Villeneuve, who looked everything over and provided feedback. Inglis was working closely with the director and was, therefore, familiar with his ideas and preferences.

Slowly, Territory narrowed its focus. The team started shaping its abstract ideas into assets, or screens, that could be formally presented to Inglis and the rest of the film’s producers. Around this time, the studio gained proper access to the art department and received a full breakdown of the work that needed to be completed. The team switched to Adobe Photoshop and Illustrator for its designs, applying animation in After Effects and professional 3D modelling software Cinema 4D.

“As soon as anything got too clean, or too fine, it was instantly going down the wrong direction,” Popplestone said. The team created and curated libraries of textures and optical, line-based layers inspired by its real-world experiments. Distortion, warping and other artificial techniques were used to give the screens a grubby but beautiful look.

Territory was eventually given permission to read the script. The team had to fly to Hungary, however, to skim through the pages in an isolation chamber. “I had roughly half an hour to read the script,” Eszenyi recalled. As such, he only had a rough idea of how the different sets and story sequences fitted together. Back in London, the team would constantly ask each other what they remembered from their brief time with the script. Thankfully, Inglis was always available to confirm anything they had forgotten.

“He was the arbiter of all information,” Popplestone said.

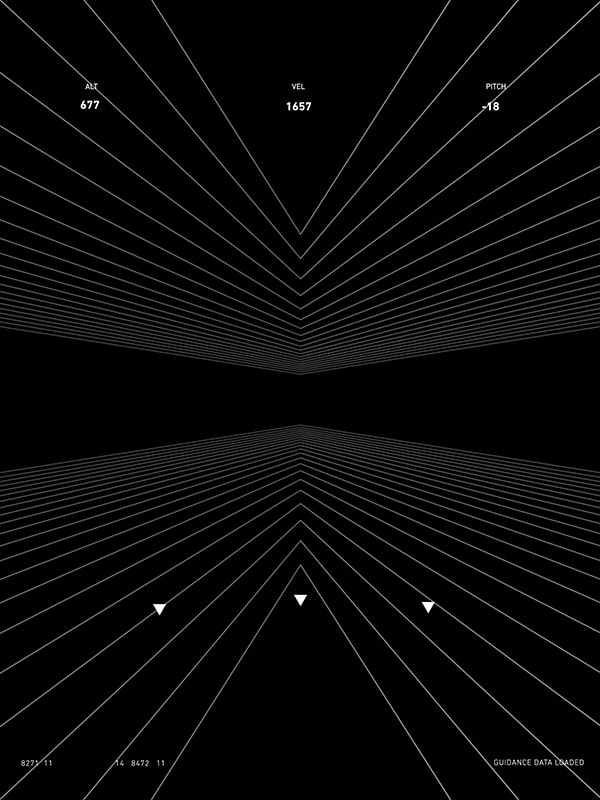

Near the end of the film, Deckard is handcuffed and bundled into a large spinner, which the team calls the Limo. It’s owned by Wallace Corporation and is, therefore, a luxurious vehicle. Up front, barely in shot, you can see the pilot and a few screens with monochromatic designs. They’re simple, sophisticated screens, conveying information with minimal dots and triangles.

That same design language can be seen inside the rest of the Wallace Corporation. It’s a sparse but immediately recognisable look. Territory’s goal was to build something that felt like Wallace’s own, personalized operating system. So specialized, in fact, that Wallace wouldn’t require the usual labels and iconography found on mass-market platforms like Windows and macOS. It was designed for him, and is, therefore, supposed to be an extension of his tastes.

Wallace’s employees, of course, aren’t Wallace. So the implication is that everyone inside the company is using an operating system designed for someone else. “It speaks of corporate arrogance and confidence,” Sheldon-Hicks said. “And a power that is beyond needing to worry about the masses.”

The LAPD is a little different. K reports to Lieutenant Joshi, played by Robin Wright. The monitors in her office are chunky and the screens have a blue tinge to them. They’re functional and better than what most of the public has access to, but a far cry from what Wallace Corporation uses. It’s a reflection of how law enforcement and emergency services are run currently. The UK’s National Health Service, for instance, still uses Windows XP. Police often have to wait to acquire new technology for their department.

Layering that context into screen designs can be tricky. The technology had to look outdated for 2049, but given the time period, also relatively futuristic. “It’s old technology compared to Wallace,” Popplestone explained, “but it’s still advanced for us. So we had to make it look modern and more advanced than what we’ve got, yet still somehow slightly knackered and dilapidated.”

Territory also had to be mindful of the original film and the off-screen events that Villeneuve had envisioned between 2019 and 2049. It was a relatively straightforward task; the sheer length of time and the cataclysmic event (partly explored in the Black Out 2022 short by Shinichiro Watanabe) meant there was little the team had to reference or honor. That was by design. Villeneuve wanted a world “reset,” so everyone on the project could freely explore new ideas. The film has Spinners, rain-soaked cities, and Deckard’s iconic blaster, but otherwise there’s little in the way of technological tissue.

“It was a completely clean slate,” Eszenyi said.

Almost every screen Territory produced serves a specific purpose in the story. They help K uncover a new clue, or learn something interesting about another character. But each one also says something more about the world of Blade Runner 2049. What’s common or unusual for people in different jobs and social classes. They hint at the state of the economy, the rate of innovation and how the development of artificial intelligence — replicant and otherwise — is affecting people’s relationships and behavior with technology.

“It’s a much more subtle, contextual narrative,” Popplestone said.

Take the market. Partway through the movie K stands in the middle of a square, contemplating a series of photos. The film is focused on these images, but in the background you can see large, illuminated food adverts. They’re square in shape, doubling as buttons that dispense orders like a giant gumball machine. Up above, animated banners advertise Coca-Cola and other food and drink products. It’s one of the few times Territory designed graphics that didn’t have a specific story function. They’re still a point of interest, however, providing a rare look at how people live in this future version of Los Angeles.

Territory also had to think about how its screens would look in relation to the camera. Some were filmed up close, while others were only visible in the background. It was important, therefore, that designs were readable at different distances. To test this, the team constantly squashed and scaled up its graphics to see what they would look like on screen. “Does it have the detail to have a close lens on it? And can you go wide, and blur it out, and still read it?” Sheldon-Hicks said.

When a computer or machine is shown on film, it needs to be believable. Sometimes, a static display will do. But others require animation and multiple screens, or loops, to be chained together. Early in the movie, for instance, K steps into his personal Spinner. The screens lining the dashboard change as a call from Joshi comes in, and K scans the eyeball of a replicant he was hunting earlier. These are subtle, but necessary transitions to sell the idea that the vehicle is real.

Every shot was different, but generally Territory provided screens with an initial state, an action state, and then a looping state. Some screens had additional action states, if they were required to pull off a particular sequence. The different states were then triggered by actors or production staff on cue.
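The initial, action and looping states described above can be pictured as a tiny state machine. The sketch below is purely illustrative and is not Territory's actual playback tooling: the class name `ScreenPlayback`, the `cue()` method and the state labels are all hypothetical, and it assumes each cue simply advances the screen one step until it settles into its loop.

```python
class ScreenPlayback:
    """Hypothetical model of one on-set screen: initial -> action state(s) -> loop."""

    def __init__(self, action_states=None):
        # Most screens have a single action state; some sequences need several.
        self.action_states = list(action_states or ["action"])
        self.state = "initial"
        self._next_action = 0

    def cue(self):
        """Advance one step when an actor or crew member triggers the screen."""
        if self._next_action < len(self.action_states):
            self.state = self.action_states[self._next_action]
            self._next_action += 1
        else:
            # Once every action has played, the screen holds on its loop.
            self.state = "loop"
        return self.state


# Illustrative labels loosely based on K's Spinner dashboard (assumed names).
spinner = ScreenPlayback(["incoming_call", "eye_scan"])
states = [spinner.state] + [spinner.cue() for _ in range(4)]
print(states)  # ['initial', 'incoming_call', 'eye_scan', 'loop', 'loop']
```

Keeping the logic this simple mirrors the point made in the article: a triggered sequence of pre-rendered states is far cheaper to build, and easier for an actor to operate, than a fully coded application.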

Territory could, in theory, design and code full-blown applications. But for a movie like Blade Runner, that would be a costly and time-consuming process. After all, a screen is largely redundant once the scene has been shot. There are also the practicalities of shooting a movie. An actor’s focus is already split between the lights, the camera, the lines they need to remember, and the positioning of other cast members. If a screen or prop isn’t simple, it could affect their focus and the overall quality of the performance.

Territory’s graphics also have to serve the dialogue, changing with a certain rhythm or when particular lines are delivered. When Luv was looking for K’s location, for instance, there needed to be a search tool, followed by a map that clearly showed his whereabouts. In the real world, you would probably get the following confirmation or prompt in Google Maps: “by The Cosmopolitan, did you mean…?” In a film, however, where pacing is critical, these intermediary screens are unnecessary and detract from the film’s entertainment value.

“There are these push and pull factors of narrative versus reality,” Sheldon-Hicks said. “You don’t want to completely break away from reality. So we’re always treading this line, or threading this needle on set in quite a tricky way.”

Territory sent Rafferty-Phelan to Hungary to provide support while the movie was being filmed. There, he could answer questions and make last-minute changes required by Villeneuve or anyone else on set. These are normally small: sometimes the lighting is different than the team expected, or the director asks if some text can be adjusted. If the edits are minor, they can often be done on location by a member of the Territory team, avoiding difficult delays in shooting or expensive tweaks in post.

For Sheldon-Hicks, there’s another reason to send his employees out on location. They’re building a relationship with the director, who might want to work with them again in the future. It’s also an opportunity for the company to collaborate and learn from some of the best creative talents in the industry. “It’s like free training for me,” he said. “I’m being paid to send my team out and see how Scott or Villeneuve tells a story. Of course I’m going to send them out.” The more talented and experienced Territory becomes, the more likely it is to win contracts in the future.

Territory strives to deliver screens that can be shot with a camera on set. But there’s always a chance something will need to be changed in post. Some films require extensive reshoots long after Territory has wrapped up its work on set. Other times, the film requires a particular look, or flourish, that simply isn’t possible with current technology. Every project is different. On The Martian, for instance, Scott was able to shoot almost everything in camera. “The whole thing just went through in lens, done,” Sheldon-Hicks recalls. Ex Machina, directed by Alex Garland, was the same.

Territory has been hired in the past to work on films, such as Ghost in the Shell, while they were in post-production. That means delivering concepts or assets that can be added to the movie after shooting has wrapped. With Blade Runner 2049, however, the company’s work was finished once the cameras had stopped rolling. The team provided some resources so that other companies could tweak their work in post, but otherwise, its work was done.

Handing over control can be difficult, but it’s all part of the filmmaking process. “There’s just nothing you can do about it,” Sheldon-Hicks said. “You know that they’re all working to make the work better, and you don’t want your graphics to look beautiful but be in a movie that sucks. So we all kind of accept that.”

Eszenyi is “pretty sure” that parts of the morgue sequence were changed in post. It was a highly choreographed scene, with multiple props and screens, so the odds of a post-shot tweak were higher than other scenes in the movie. Still, it gave the actors real, visual cues to act off, and a basis for the graphical adjustment in post. So it’s not like Territory’s efforts were wasted. Even so, the team felt a mixture of emotions when they watched the first trailer in December last year. “It’s like, yeah, that’s my kid,” Eszenyi explained, “but she’s not two years old anymore, she’s 18.”

Blade Runner 2049 is a beautiful movie. The gloom of downtown Los Angeles and the harsh, radioactive wasteland of Las Vegas clash with the design decadence of Wallace Corp and the steely cold of K’s apartment. The film’s visual prowess can and should be attributed to cinematographer Roger Deakins and everyone who worked on the sets, costumes and visual effects. Territory’s contributions can’t be overstated, however. By blurring the line between technological fantasy and reality, the team has made it easier to believe in a world filled with bioengineered androids. Which is pretty cool for any fan of science fiction cinema.

Images: Alcon Entertainment (Blade Runner 2049); Nick Summers (photography)

U.S. Senators Ask Apple Why VPN Apps Were Removed From China App Store

Two U.S. senators have written to Apple CEO Tim Cook asking why the company removed third-party VPN apps from its App Store in China (via CNBC). Reports that Apple had pulled the VPN apps first arrived in July, following regulations passed earlier in the year that require such apps to be authorized by the Chinese government.

In the open letter dated October 17, Senators Patrick Leahy and Ted Cruz write that China has an “abysmal” human rights record when it comes to freedom of expression and free access to online and offline information, and say they are “concerned that Apple may be enabling the Chinese government’s censorship and surveillance of the internet”.

Senators Ted Cruz (R-Texas, left) and Patrick Leahy (D-Vermont)

“While Apple’s many contributions to the global exchange of information are admirable, removing VPN apps that allow individuals in China to evade the Great Firewall and access the internet privately does not enable people in China to ‘speak up’.”

“To the contrary, if Apple complies with such demands from the Chinese government it inhibits free expression for users across China, particularly in light of the Cyberspace Administration of China’s new regulations targeting online anonymity.”

The senators go on to note that Cook was awarded the free speech award at Newseum’s 2017 Free Expression Awards, where he said: “First we defend, we work to defend these freedoms by enabling people around the world to speak up. And second, we do it by speaking up ourselves.”

In the bipartisan request, the senators then ask Cook to explain Apple’s actions by answering a list of questions, including whether Apple was personally asked to remove the VPN apps by Chinese officials, and if the company expressed its concerns to the Chinese authorities before the country’s anti-freedom laws were enacted.

In addition, the senators question what Apple has done to promote free speech in China and whether it has pushed for human rights and better treatment of oppressed groups in the country.

During an earnings call, Cook spoke about his decision to remove the VPN apps. “We would rather not remove apps, but like we do in other countries, we follow the law where we do business.” Cook went on to say that he hopes China will ease up on the restrictions over time.

Apple has yet to respond to the letter.

Google Follows Apple’s Lead By Reducing Play Store Fee for App Subscriptions

Google revealed on Thursday that it would follow Apple’s lead in lowering the amount of money app developers must pay for mobile subscriptions processed through the company’s Play Store (via The Verge).

Adoption of the subscription model by iOS developers has increased over recent months, causing some controversy within the app-using community. Apple incentivized developers to sell their apps for a recurring fee instead of a one-time cost when it made changes to its App Store subscription policies in September of last year.

Usually, Apple takes 30 percent of app revenue, but developers who are able to maintain a subscription with a customer longer than a year see Apple’s cut drop down to 15 percent.

Google is adopting the same policy for subscriptions in its Play Store – an Android developer selling a subscription service will be eligible for the cut if the customer in question has been subscribed for more than a year. The company plans to bring the change into effect starting January 2018.
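To make the arithmetic concrete, here is a rough sketch of what a developer keeps under the 30 percent and 15 percent tiers described above. The function name, the $10 monthly price and the assumption that the lower cut applies from the 13th monthly payment onward are all illustrative; the stores' actual billing rules may differ in detail.

```python
def developer_revenue_cents(price_cents, months,
                            standard_cut_pct=30,
                            reduced_cut_pct=15,
                            threshold_months=12):
    """Total developer revenue, in cents, from one subscriber over `months` payments.

    Assumption: the store keeps `standard_cut_pct` for the first
    `threshold_months` payments and `reduced_cut_pct` afterwards.
    """
    total = 0
    for month in range(1, months + 1):
        cut = standard_cut_pct if month <= threshold_months else reduced_cut_pct
        total += price_cents * (100 - cut) // 100
    return total


# Hypothetical $10-a-month subscription held for two years.
two_years = developer_revenue_cents(1000, 24)
flat_rate = developer_revenue_cents(1000, 24, reduced_cut_pct=30)
print(two_years / 100)  # 186.0
print(flat_rate / 100)  # 168.0
```

Under these assumptions, a subscriber who stays for two years is worth $18 more to the developer than under a flat 30 percent cut, which is the incentive to keep users subscribed.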

As The Verge notes, Google is trying to stay competitive with Apple by offering a reduction in its fees. This way the company ensures that subscription services like Spotify don’t try to bypass the Play Store in an effort to avoid paying the fee. But it also encourages developers to work harder to keep users subscribed for longer, given that the fee reduction doesn’t take effect until 12 months into the initial subscription.

Watch Jeff Bezos smash champagne atop a wind turbine to launch a new wind farm

Why it matters to you

With energy demands on the increase, wind farms like this can help take care of some of this need.

He founded ecommerce giant Amazon and the ambitious space company Blue Origin, and now owns the Washington Post, so if Jeff Bezos wants to bless his new wind farm by standing atop a turbine and smashing a bottle of champagne on it, that’s fine by us.

Fun day christening Amazon’s latest wind farm. #RenewableEnergy pic.twitter.com/cTxeXdsFop

— Jeff Bezos (@JeffBezos) October 19, 2017

The wind farm in question is in Texas and is, according to Amazon, the company’s largest renewable energy project to date. Bezos posted a video of his bottle-smashing exploits on Twitter. The footage begins close up to the Amazon CEO before moving away to reveal the vast expanse of the wind farm. It also makes you realize just how mind-bogglingly massive one of those wind turbines actually is, or just how tiny Jeff Bezos is. We’re guessing the former.

Located in Scurry County, Amazon’s latest wind farm includes 100 turbines capable of providing enough clean energy to power 330,000 homes. The company said these projects also help to support “hundreds of jobs and provide tens of millions of dollars of investment in local communities across the country.”

Kara Hurst, Amazon’s worldwide director of sustainability, described the company’s investment in renewable energy as a “win-win-win-win — it’s right for our customers, our communities, our business, and our planet.”

The company has so far launched 18 wind and solar projects across the U.S., with 35 more under development. “These are important steps toward reaching our long-term goal to power our global infrastructure using 100 percent renewable energy,” Hurst said.

Alongside its investment in renewable energy, Amazon is also focusing on sustainability, evidenced by its Frustration-Free Packaging program that helped to eliminate more than 55,000 tons of packaging in 2016.

Amazon’s announcement of its latest wind farm comes just a few days after Greenpeace criticized it for being “one of the least transparent companies in the world in terms of its environmental performance,” claiming it refuses to report its greenhouse gas footprint, “nor does it publish any restrictions on hazardous chemicals in its devices or being used in its supply chain as other leading electronics brands provide.”

Even if Greenpeace is unimpressed by Bezos’s smashing video, hopefully it’ll at least welcome this latest renewable energy effort from the company.

Editor’s Recommendations

- Chase the wind with our guide to kitesurfing equipment for beginners

- The cryptocurrency from this wind-powered mining rig helps fund climate research

- What It’s Like To Play VR Racer Radial-G In A Wind Tunnel

- Virtual reality racing in an 80 mph wind tunnel is cheek-flapping good fun

- Australia will build a solar power plant to meet the government’s energy needs

DHL’s autonomous mail robot is huge and won’t take the jobs of delivery workers

Why it matters to you

Mail workers with dodgy necks and backs would welcome a robot buddy like PostBOT on their rounds.

Delivery giant DHL has invested a great deal of time and money in developing delivery drones, and over the last few years has run several trials targeting isolated communities on small islands and in mountainous regions.

Its latest autonomous effort involves not a flying machine but a ground-based robot by the name of PostBOT. If you’re a mail delivery worker, the good news is that PostBOT isn’t out to replace you. Rather, it wants to act as your buddy, accompanying you on your rounds, carrying all the mail, and, importantly, freeing up your hands so you can deal with letters and packages on the move.

Deutsche Post DHL Group announced this month that it’s ready to start testing electric-powered PostBOT in Bad Hersfeld, a town of about 30,000 people in central Germany.

Designed by French robotics firm Effidence with input from DHL delivery staff, PostBOT is a hefty-looking machine that stands at around 150 centimeters. It holds six mail trays and can carry loads of up to 330 pounds (150 kg), enough weight to vaporize all the discs in your back if you ever attempted to carry all that by yourself. Best leave it to PostBOT.

On-board sensors track the legs of the mail carrier, ensuring both robot and human stay close to one another for the entirety of the round. As you’d expect, those sensors also prevent PostBOT from barreling into obstacles, though it’s a safe bet that any nearby pedestrians will be quick to make space if they see this large and somewhat bulky robot coming their way.

“Day in and day out, our delivery staff performs outstanding but exhausting work,” said Jürgen Gerdes of Deutsche Post DHL. “We’re constantly working on new solutions to allow our employees to handle this physically challenging work even as they continue to age.” And with Germany a nation of four seasons, PostBOT has been built to handle all weather conditions, ensuring year-round operation.

Gerdes said many staff are already making use of e-Bikes and e-Trikes for mail deliveries, while the six-week PostBOT trial is expected to offer “important insights into how we can further develop the delivery process for our employees.”

Ground-based delivery robots have been getting increasing exposure in recent years, though up to now most of them have been concerned with grocery and fast-food orders. As with drone technology, one of the main obstacles to their implementation is local authorities that need convincing of their reliability and safety. Steve the mall-based security robot, for example, recently proved that some designs clearly aren’t quite ready.

Editor’s Recommendations

- New van from Ford, DHL shows electric powertrains aren’t just for passenger cars

- ‘Delivery chutes’ are Amazon’s latest idea for its drone delivery service

- TWB Podcast: Galaxy vs. iPhone vs. Pixel, Icelandic Drones and Elon’s Spacesuit

- In Yeti’s innovation labs, tough outdoor gear is born (and beaten senseless)

- Hard-earned tips you’ll need to take back Earth in ‘XCOM 2: War of the Chosen’

Google will pay hackers who find flaws in top Android apps

Google is probably hoping to raise the quality of apps in the Play Store by launching a new bug bounty program that’s completely separate from its existing one. While its old program focuses on finding flaws in its websites and operating systems, this one will pay hackers when they find vulnerabilities in Android’s top third-party apps. They have to submit their findings straight to the developers and work with them before they can turn in a report through HackerOne’s bounty platform to collect their reward.

Google promises $1,000 for every issue that meets its criteria, but bounty hunters can’t simply choose a spammy app (of which there are plenty on the Play Store) to cash in. For now, they can only get a grand if they can find an eligible flaw in Dropbox, Duolingo, Line, Snapchat, Tinder, Alibaba, Mail.ru and Headspace. Google plans to invite more app developers in the future, but they have to be willing to patch any vulnerabilities found… which means you can’t rely on the program to fix the issues in your favorite low-quality application.

Via: Android Police

Source: HackerOne

Pioneer and Canada partner to ensure musicians get paid for DJ play

Pioneer DJ wants to make sure electronic artists get paid for the remixes you hear at the dance club. The company’s Kuvo entertainment service has partnered with Canada’s performing rights organization (PRO), the Society of Composers, Authors and Music Publishers of Canada (SOCAN), to beam music metadata into other PROs, according to a press release. Apparently this won’t cost DJs or venues a thing, either.

“After testing Kuvo extensively at one of Canada’s largest electronic music nightclubs, Coda in Toronto, SOCAN has welcomed the implementation of the service at any venue in the country wishing to use it,” the statement reads.

Given how much music DJs put into a set and the very nature of the mashups they create, ensuring everyone gets their fair share of royalties can be difficult. Kuvo seems like a pretty elegant way of addressing that.

Club goers stand to benefit as well. If you hear a song on a night out and don’t want to deal with how flaky Shazam can be, the Kuvo app on your phone will show you what’s playing.

The service is already being used in Austria, Belgium, Spain and the UK. This marks its first implementation in North America.

Source: Businesswire

‘The Walking Dead’ VR scene puts you in the shoes of a walker

Would you submerge yourself in a fear-inducing virtual setting overrun by zombies? That’s the world The Walking Dead has expertly crafted during its seven-year run, and now AMC is inviting you to step into it, courtesy of its VR app. You can grab it for iOS, Android, Samsung Gear VR, and Google Daydream right now, but the real fun begins on Sunday. Directly after the show’s 100th episode, the network is dropping an exclusive VR scene.

The immersive experience will put you in the action from both sides. You’ll start off trapped in an abandoned car waiting for help to arrive as the walkers inch ever closer. If that doesn’t sound terrifying enough, you’ll also get to join the herd and feast in the carnage. Once you get your fill of claustrophobic horror, you can peruse the extras, including trailers and features from that other AMC show Into the Badlands. The network is also promising to keep the app stocked with virtual experiences for the foreseeable future.

The AMC VR app follows the announcement of The Walking Dead: Our World — an augmented reality game coming soon to iOS and Android. The two combined should turn you into a regular zombie-slaying survivalist.

In sneak preview, Adobe shows off tech for automatically colorizing old photos

Why it matters to you

Sneak peeks at Adobe’s latest projects offer a rare glimpse at how the company’s products are developed, and what new tools may come in the future.

In what has become a popular tradition at the annual Adobe MAX show, Adobe showed off several sneak previews of in-development technologies that may make their way to future versions of Photoshop, Premiere Pro, Illustrator, and more. For example, Character Animator, which recently came out of beta, was unveiled during a previous MAX show. While all of the projects are interesting in their own right as examples of cutting-edge software tech, a few stand out for photographers and video editors.

Powered by the Adobe Sensei AI engine, Project Scribbler can take a black and white photograph and automatically colorize it with surprisingly realistic results. The program was trained on tens of thousands of images to be able to recognize facial features of a monochrome image and appropriately apply correct colors to different regions of a face, from the hair to the skin to the lips and teeth.

Although Project Scribbler is currently limited to faces — it can’t colorize full-body portraits — it is not limited to photos; it can colorize sketches, as well. In a live demonstration, Adobe showed how it can help an artist ideate a character or do a quick mockup to show a client before diving in and finishing the color by hand.

Sensei was definitely a running theme at MAX this year, and two additional projects are using it to provide a much more robust alternative to Photoshop’s Content Aware Fill option for removing and replacing objects in a scene. Project Scene Stitch draws on deep learning and semantic cues to replace a photo’s foreground with one built from Adobe Stock images, while Project Deep Fill applies similar technology to replace smaller objects within an image. Deep Fill can also reshape objects based on user input, which Adobe demonstrated by sketching a heart line beneath a rock arch which caused the arch to conform to the shape of the sketch.

For video editors, Project Cloak is essentially Content Aware Fill applied to video. It automatically removes objects from a video shot without the user needing to clone out the object on a frame-by-frame basis, and it does so in a way that is much more accurate than per-frame editing.

In a series of examples, Adobe demonstrated the impressive range Project Cloak offers, from removing a lamppost to erasing two people from a shot where both the people and the camera were moving. If this technology makes its way into a shipping product (our guess is it would end up in After Effects), it would undoubtedly be a game changer for many editors.

For immersive video creators, Adobe also showed off two projects for working in 360-degree space. Project Sidewinder builds a depth map from stereoscopic 360 video, which creates a convincingly real three-dimensional effect and allows the viewer to change perspective beyond simply rotating, moving from side to side or up and down. When it comes to audio, Project SonicScape offers a visual way to see and reposition audio sources within the spherical space.

Adobe showed off 11 development projects in total that ran the gamut from photography and video to design and 3D modeling and even data visualization. As with past Adobe sneaks, none of the technology demonstrated at MAX is guaranteed to be incorporated into a commercially available product, but the projects do offer a very real look at what Adobe is looking into and the types of tools we can expect to see in the not-too-distant future.

Editor’s Recommendations

- ‘Stylized Facial Animation’ project turns video into realistic-looking hand-drawn art

- Adobe launches new programs and updates to old favorites at 2017 MAX conference

- Adobe’s new Lightroom leverages the cloud for cross-platform photo editing

- Photoshop 2018 now supports 360-degree photos, adds new design tools

- Custom fonts in Photoshop? The feature is coming soon with variable typography